Nano Banana 2 vs GPT Image 2: which model wins for ads, edits, and layouts?

Nano Banana 2 is the faster everyday default for broad image generation and edits, while GPT Image 2 is the newest OpenAI image workflow to test for readable ad layouts, marketplace packshots, editorial compositions, and medium-quality outputs at 1K, 2K, or 4K.

The benchmark focuses on matched prompts and real marketing tasks first, so the first-screen verdict is about output quality, editing behavior, speed, and cost.

Nano Banana 2 vs GPT Image 2 (Quick Summary)

A fast view of where each model wins across generation quality, editing preservation, speed, pricing, and production use cases.

Best default starting point

Nano Banana 2

Fast everyday generation and edits

GPT Image 2

Newest OpenAI workflow for text-heavy production assets

Non-shared formats

Nano Banana 2

4:5, 5:4, 21:9, 4:1, 8:1

GPT Image 2

3:1 and 1:3

Text and ad layouts

Nano Banana 2

Readable, but less finished on text-heavy layouts

GPT Image 2

Wins most text-heavy and layout-heavy tests

Pricing direction

Nano Banana 2

Higher same-size cost than GPT Image 2 medium quality

GPT Image 2

Cheaper medium-quality runs at 1K, 2K, and 4K

High-quality generation speed

Nano Banana 2

Faster in high-quality test runs

GPT Image 2

About 3x slower in high quality mode

Reference-guided edits

Nano Banana 2

Wins all reviewed preservation-heavy edits

GPT Image 2

Strong results, but more source drift in this set

Overall result

Nano Banana 2

Best default for speed, composition, and edits

GPT Image 2

Best for polished text-heavy and production-heavy layouts

HummingBytes Matched-Prompt Benchmark

A side-by-side framework for comparing real marketing outputs with the same prompts, references, and shared canvas when needed.

Each direct benchmark uses the same prompt, source references, and a shared aspect ratio only where needed for a fair side-by-side comparison. The verdicts below focus on prompt adherence, preservation, text accuracy, layout quality, speed, and production usefulness.

- Readable Product Ad Poster

- Marketplace Packshot

- Website Hero Product Launch

- Spatial Logic & Physics

- Restaurant Menu Editorial Layout

- Exact Ticket Text Rendering

- Typography + Poster Composition

- Four-Reference Character Composition

- Six-Person Object Mapping Test

- Painting Style Fidelity (Luminism)

- Minimal Brand Logo Creation

- Recipe Infographic Layout

- Endangered Animal Research Infographic

- Identity-Preserving Background Change

- Reference-Guided Product Background Swap

- Physical Sign Text Replacement

Quick Rule of Thumb

- Start with Nano Banana 2 for fast everyday generation, broad creative iteration, preservation-heavy edits, and ultra-wide 4:1 or 8:1 outputs.

- Test GPT Image 2 when the job is a readable ad layout, marketplace packshot, editorial composition, medium-quality output at 1K, 2K, or 4K, or OpenAI image workflow.

- At the same image size, GPT Image 2 medium quality is cheaper than Nano Banana 2 in HummingBytes and still produces strong results.

- Expect GPT Image 2 high quality mode to be slower; in our high-quality test runs it was roughly three times slower than Nano Banana 2.

Shared-Ratio Generation Tests

These benchmark slots use matched Nano Banana 2 and GPT Image 2 outputs.

Use only shared ratios for clean comparison cards. The expanded set covers product ads, ecommerce, website heroes, exact text, poster typography, multi-reference composition, object mapping, style fidelity, menus, logo generation, and infographic layouts. The current verdicts reflect the reviewed asset set.

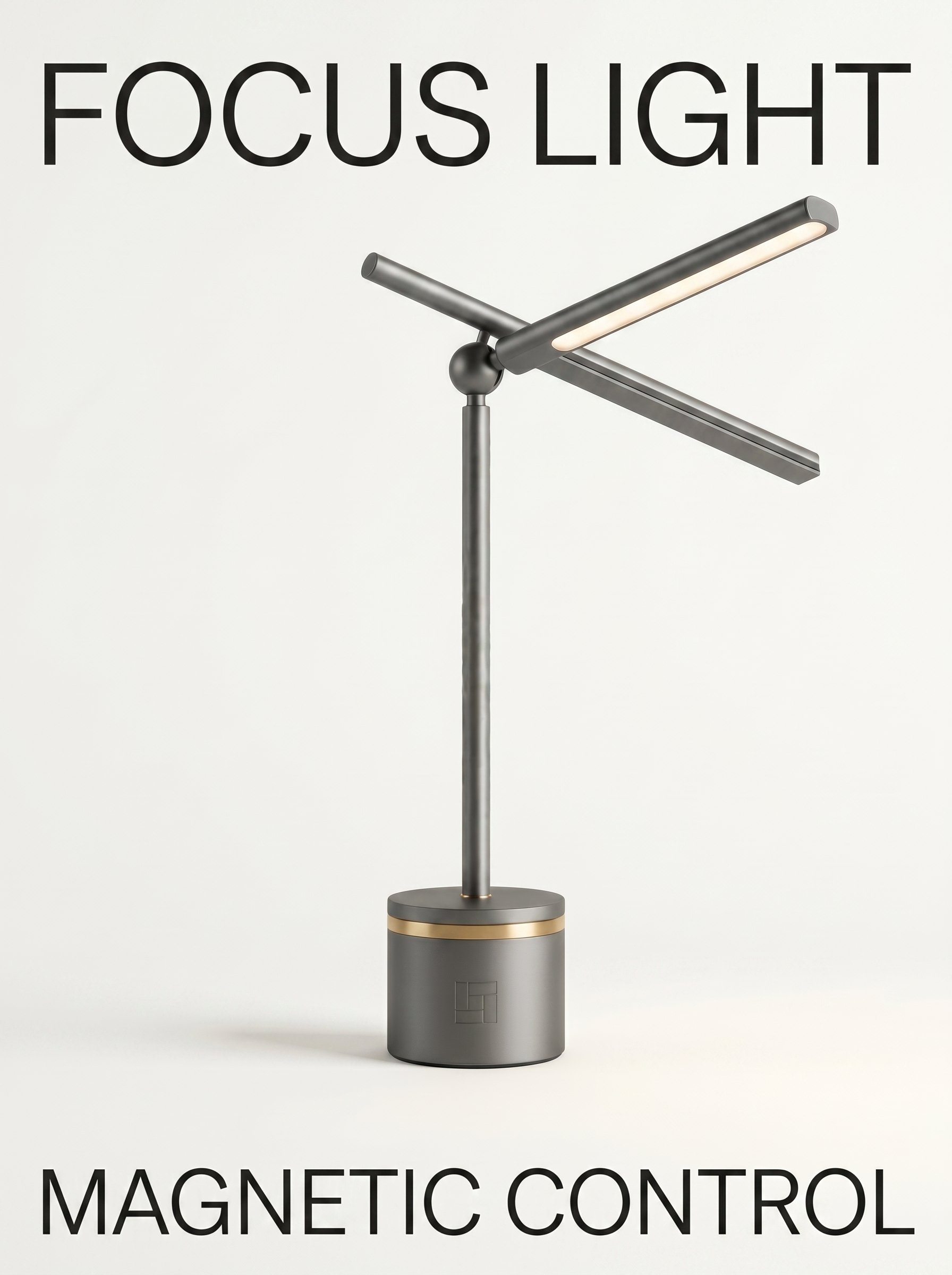

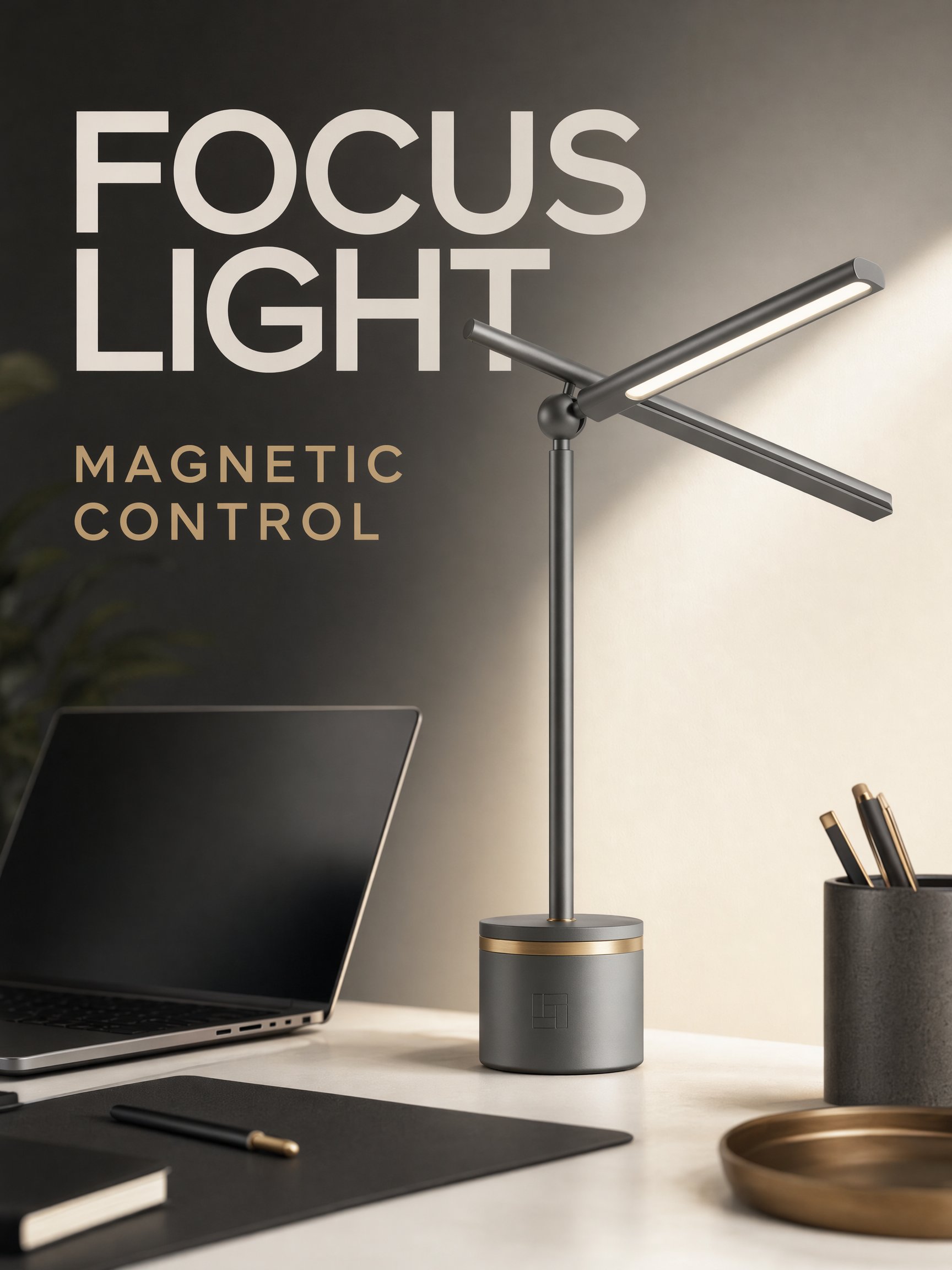

Readable Product Ad Poster

What this test checks

A short-copy advertising test in a shared 3:4 format. This checks whether each model can preserve a referenced product while rendering two exact, readable text lines in a finished commercial layout.

Verdict:

GPT Image 2Why this verdict

GPT Image 2 wins because it delivers the more publishable campaign layout: both requested text lines are readable, the hierarchy is stronger, and the product sits in a finished desk-scene ad. Nano Banana 2 preserves the product cleanly, but it feels more like a simple poster proof.

Reference Images

Marketplace Packshot

What this test checks

A square ecommerce output test. The better result should preserve the product, stay clean enough for product cards, and avoid adding fake branding or distracting props.

Verdict:

GPT Image 2Why this verdict

GPT Image 2 wins narrowly because the lamp is better centered for a marketplace grid, with cleaner margins and a more polished product-card frame. Nano Banana 2 is accurate, but the composition feels less controlled.

Reference Images

Website Hero Product Launch

What this test checks

A shared 16:9 web-placement test. This checks composition discipline: product preservation, realistic finish, and usable negative space for landing page copy.

Verdict:

Nano Banana 2Why this verdict

Nano Banana 2 wins because its result works better as an actual landing-page hero: the lamp sits on the right, the left side has usable negative space, and the warmer desk scene feels ready for page copy. GPT Image 2 is polished, but the composition is less clearly designed around copy placement.

Reference Images

Spatial Logic & Physics

What this test checks

A shared 3:4 reasoning test. The prompt forces each model to understand occlusion and mirror reflection rather than merely generate a plausible bathroom scene.

Verdict:

GPT Image 2Why this verdict

GPT Image 2 wins because it keeps the mirror setup more coherent and photorealistic while placing REAL in the direct view and FAKE in the reflection. Nano Banana 2 captures the core idea, but the body, paper, and reflection logic are less convincing.

Restaurant Menu Editorial Layout

What this test checks

A dense layout test in a shared portrait format. It stresses long-form typography, section hierarchy, menu prices, and whether the model invents extra text.

Verdict:

GPT Image 2Why this verdict

GPT Image 2 wins because the menu feels more premium and editorial, with stronger hierarchy, decorative restraint, and a more complete set of requested sections. Nano Banana 2 is readable, but it is plainer and less refined.

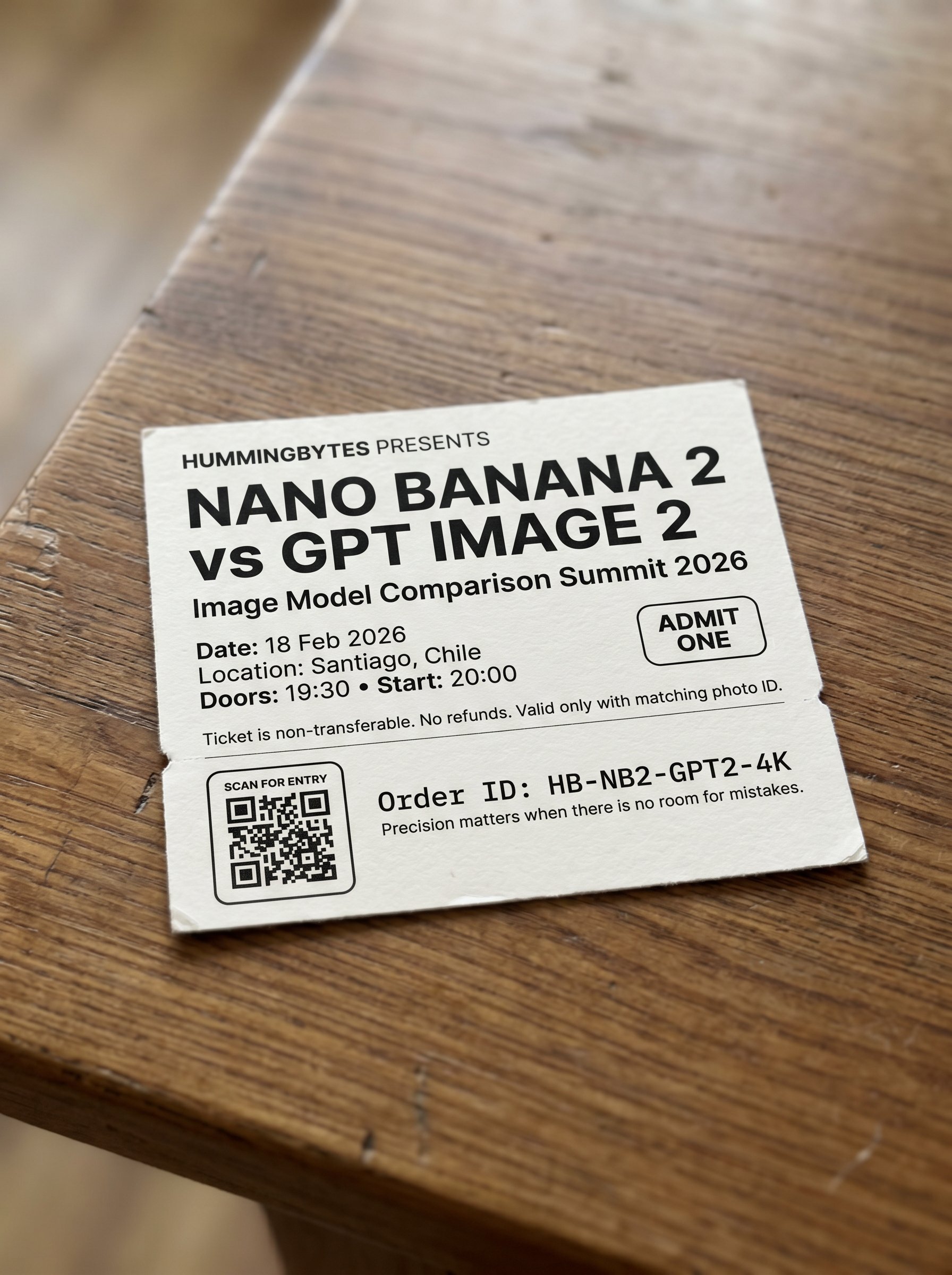

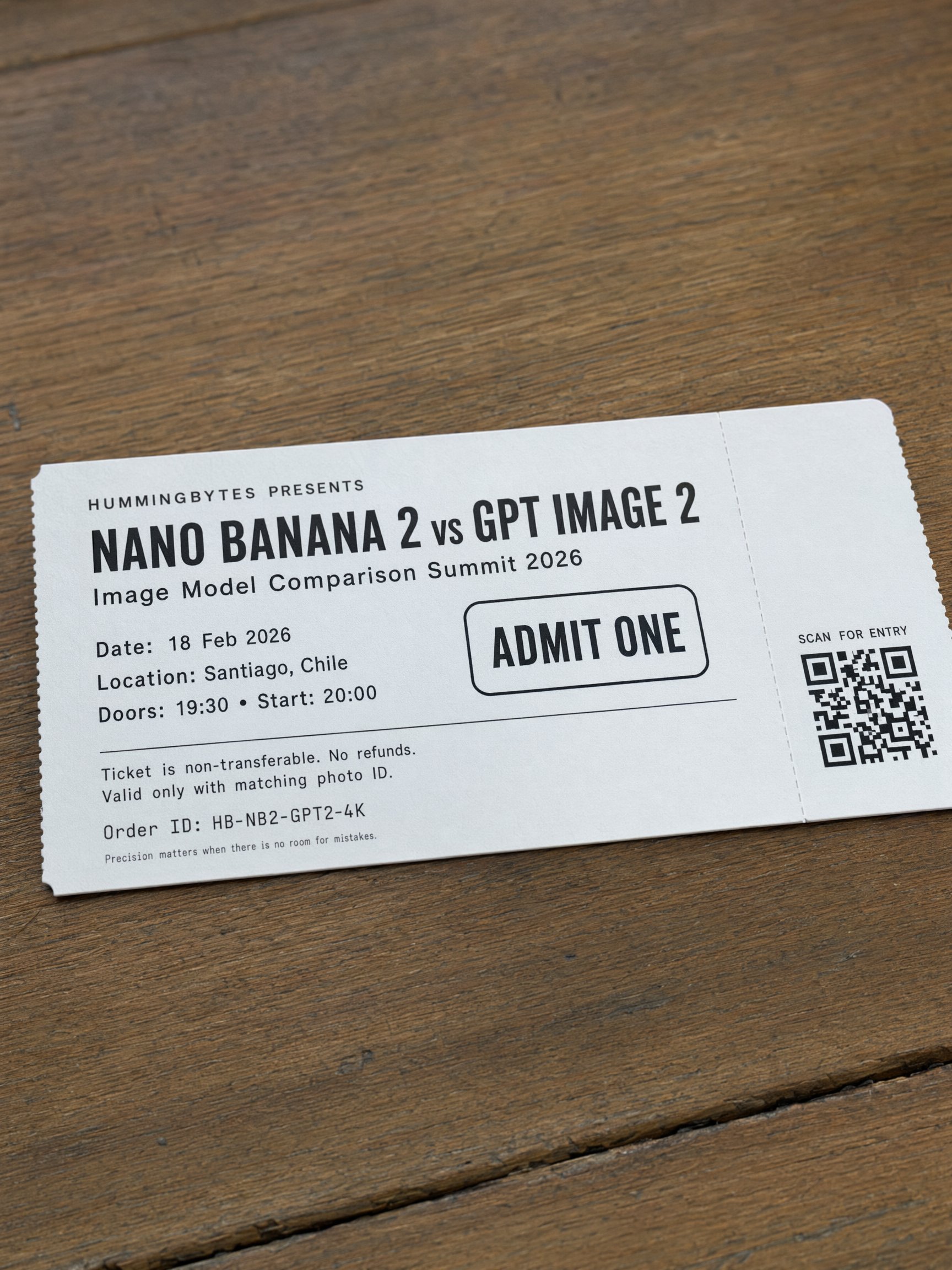

Exact Ticket Text Rendering

What this test checks

A strict text-rendering benchmark using a shared 3:4 printed-ticket prompt. This adds a harder pure typography test beyond the shorter product-ad poster.

Verdict:

GPT Image 2Why this verdict

GPT Image 2 wins because it builds a more complete printed-ticket object with stronger hierarchy, better QR placement, cleaner alignment, and more of the requested small text. Nano Banana 2 gets the main title right, but the ticket structure is less complete.

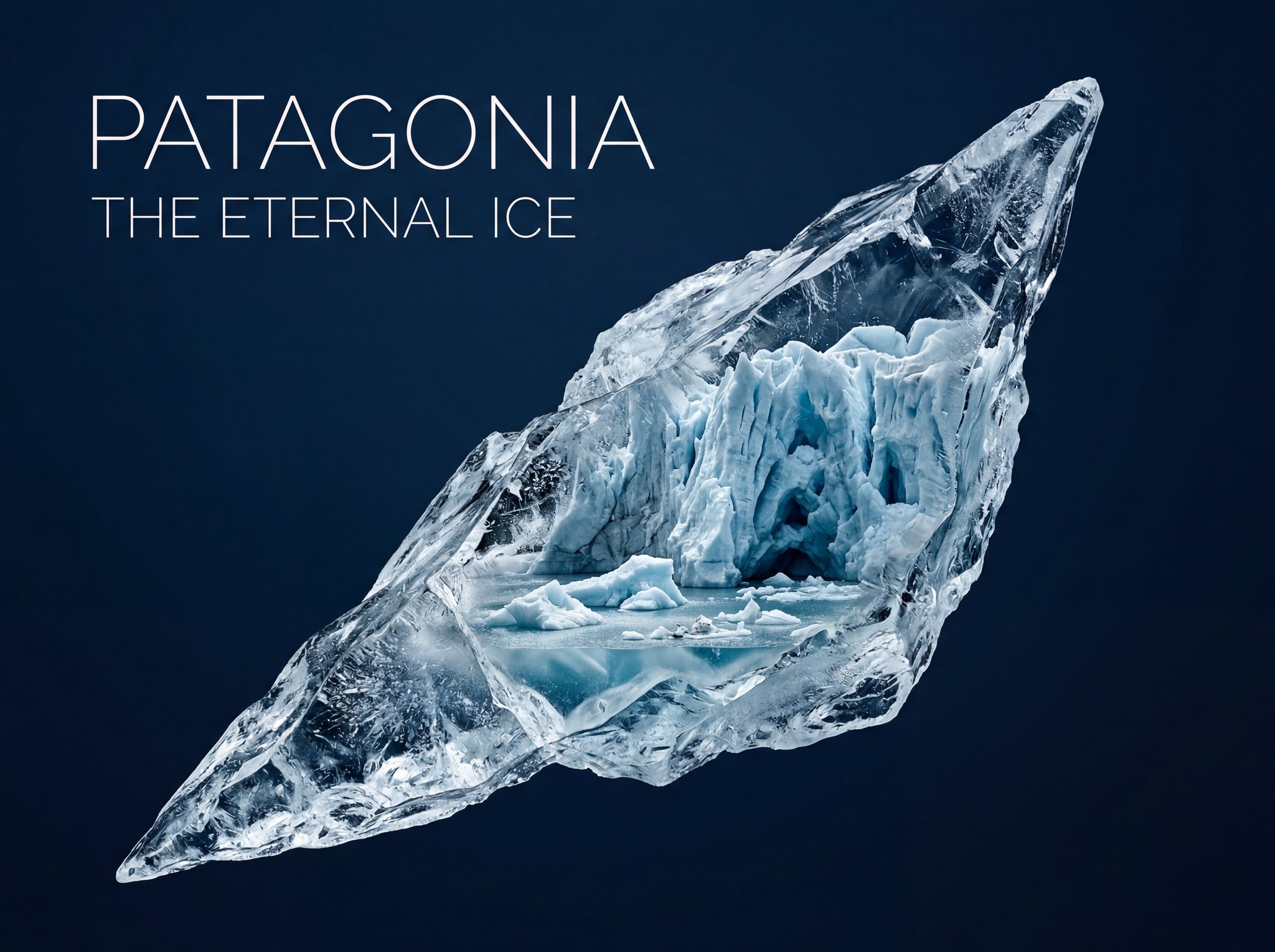

Typography + Poster Composition

What this test checks

A shared 4:3 premium poster test. It checks whether each model can balance exact title hierarchy, negative space, material detail, and restrained art direction.

Verdict:

GPT Image 2Why this verdict

GPT Image 2 wins because the ice shard has a stronger premium poster composition, cleaner negative-space discipline, and a more dramatic internal glacier world. Nano Banana 2 has readable type, but the layout is less controlled.

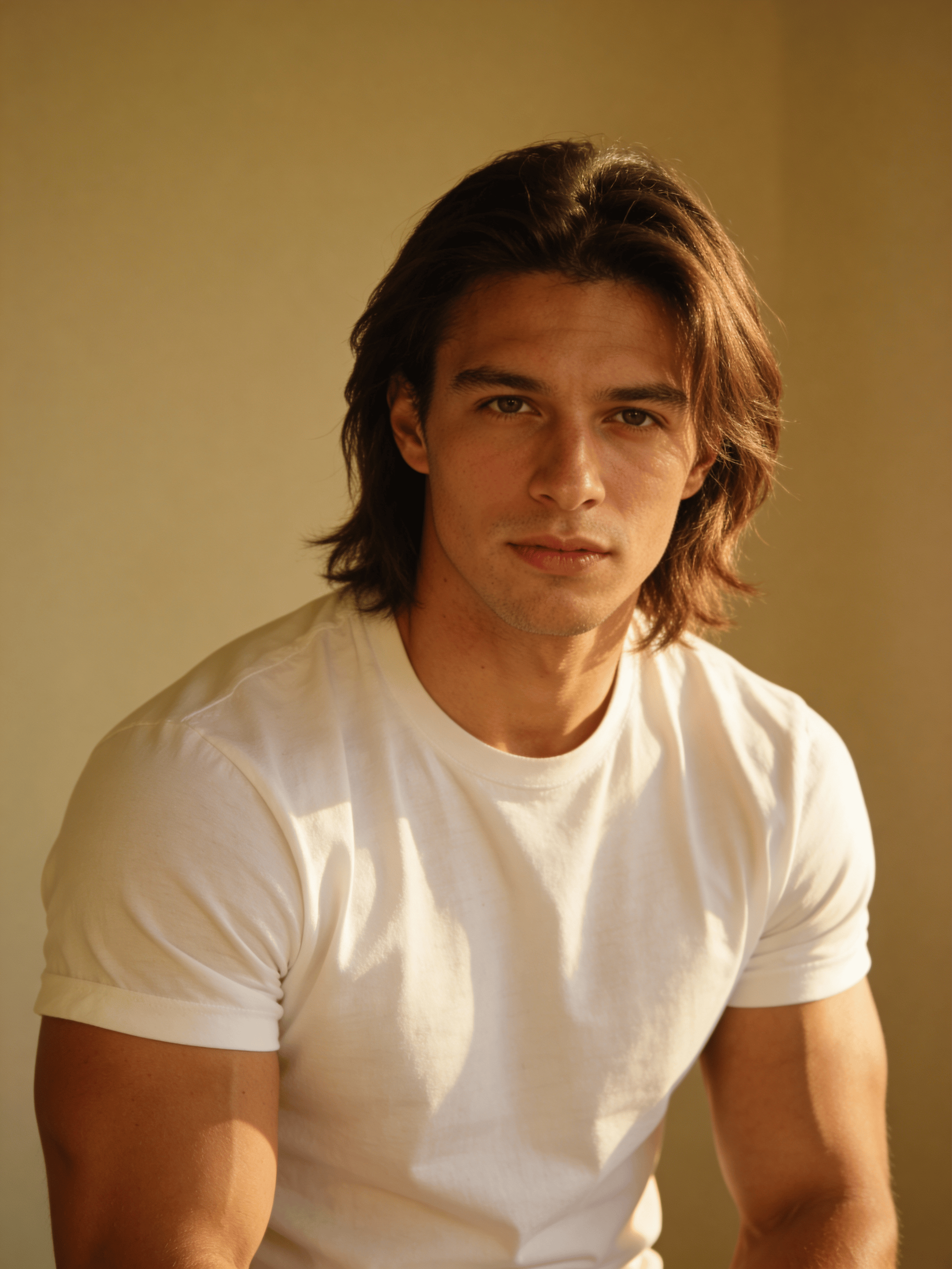

Four-Reference Character Composition

What this test checks

A shared 4:3 multi-reference test. Four separate identities must be preserved while each person performs a different action in one coherent scene.

Verdict:

Nano Banana 2Why this verdict

Nano Banana 2 wins because it preserves the four-subject scene logic more clearly: each person is spatially distinct and the requested actions are easier to read. GPT Image 2 is polished, but the composition is tighter and less diagnostic.

Reference Images

Six-Person Object Mapping Test

What this test checks

The hardest shared-ratio diagnostic on this page: six people, six distinct objects, one scene, and no identity blending or object sharing.

Verdict:

GPT Image 2Why this verdict

GPT Image 2 wins because the group portrait is more polished, faces are more visible, and the six-object mapping mostly stays legible in a tighter commercial composition. Nano Banana 2 follows the inventory well, but the scene feels less publishable.

Reference Images

Painting Style Fidelity (Luminism)

What this test checks

A shared 3:4 style-obedience test. The goal is not generic beauty; it is whether the model can channel luminism through light handling, atmosphere, and tonal restraint.

Verdict:

GPT Image 2Why this verdict

GPT Image 2 wins because the light handling, atmosphere, and central horse composition feel closer to a luminist painting. Nano Banana 2 is attractive, but it reads more cinematic and less tied to the requested painting tradition.

Minimal Brand Logo Creation

What this test checks

A shared 1:1 reduction test. The better output should respect the requested color, keep a simple silhouette, and remain recognizable as a flat logo at small sizes.

Verdict:

Nano Banana 2Why this verdict

Nano Banana 2 wins because the hummingbird mark is cleaner, more elegant, and easier to recognize as a minimal flat-vector logo at small sizes. GPT Image 2 is usable, but the silhouette is bulkier and less refined.

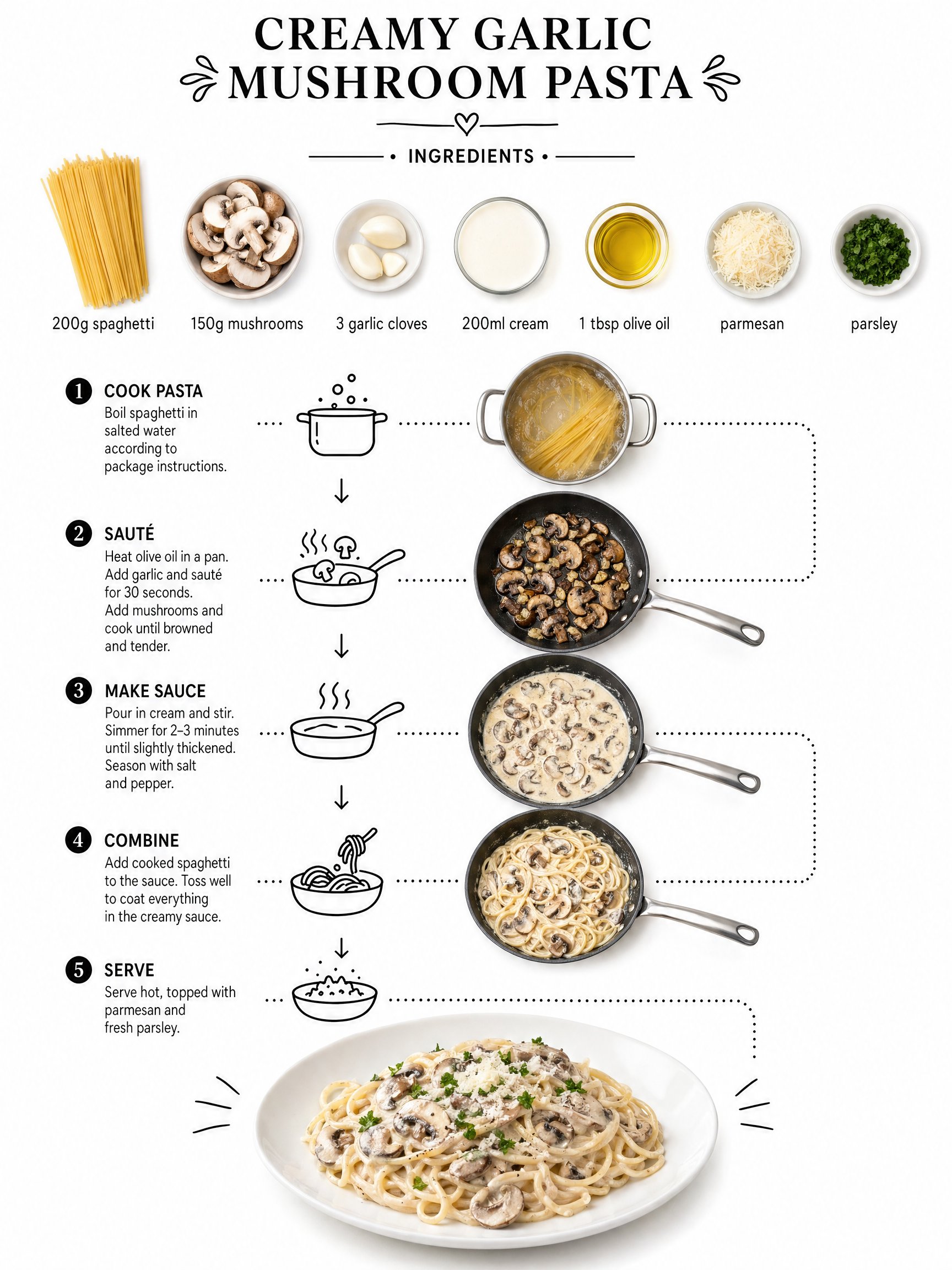

Recipe Infographic Layout

What this test checks

A shared 3:4 infographic test for ingredient labeling, process flow, icon placement, and whether the model can keep food photography and minimal graphic structure readable.

Verdict:

GPT Image 2Why this verdict

GPT Image 2 wins because it creates the stronger infographic: ingredient labels are cleaner, the process flow is clearer, and the final plated dish anchors the composition. Nano Banana 2 is readable, but the flow is more compressed.

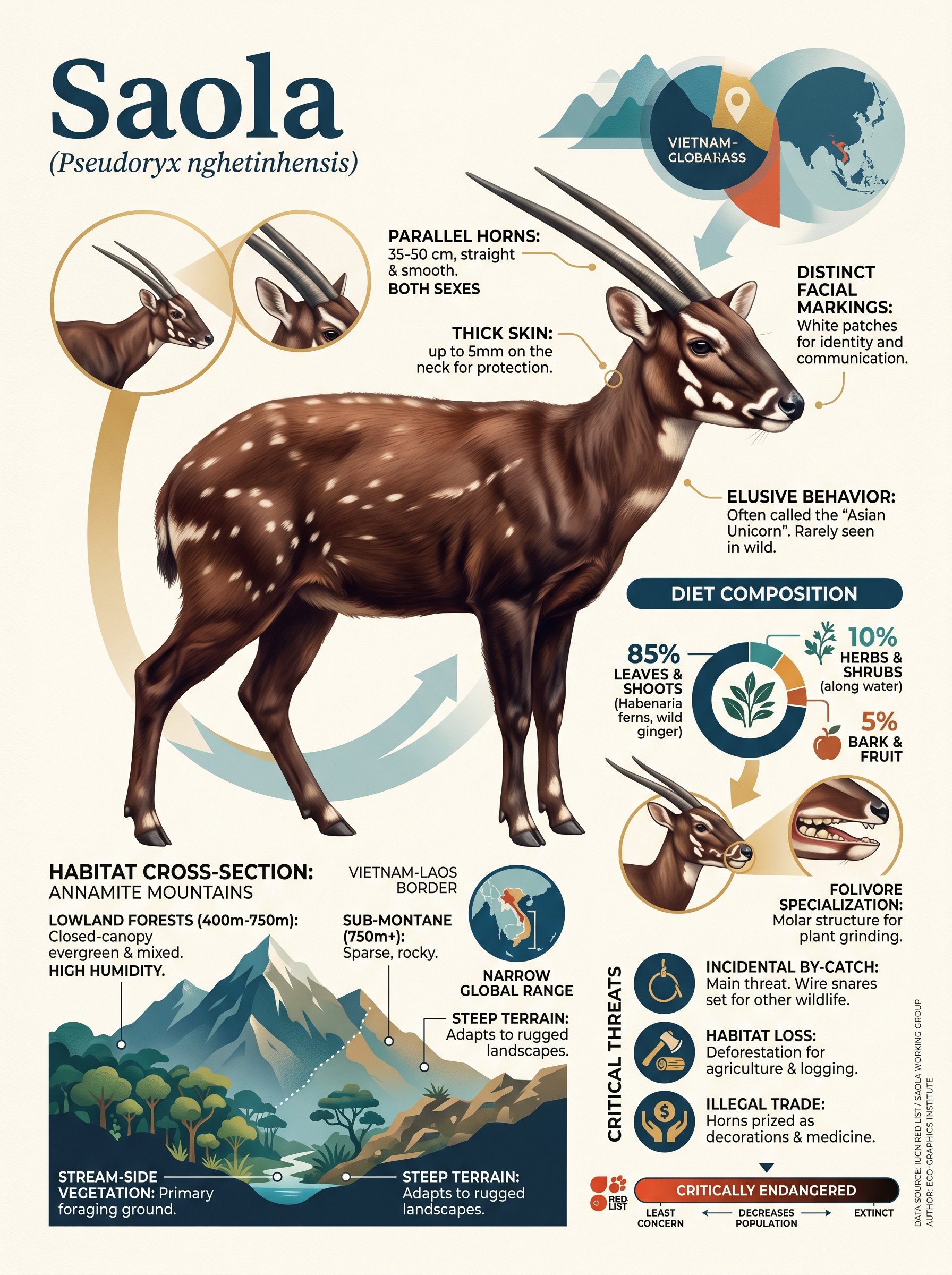

Endangered Animal Research Infographic

What this test checks

A shared 3:4 research-infographic test for factual grounding, dense callouts, diagram composition, and whether the model can blend a photorealistic focal animal with authored graphic structure.

Verdict:

GPT Image 2Why this verdict

GPT Image 2 wins because it better matches the requested dense, professionally authored infographic: the photoreal central animal is stronger, the callouts are richer, and the composition feels more tactile. Nano Banana 2 is informative, but more illustration-led.

Test Nano Banana 2 and GPT Image 2 in one workspace

Run the same prompt through both models, compare preservation, text accuracy, layout quality, speed, and cost, then choose the output that fits your real workflow.

Reference-Guided Editing Tests

Both models receive the same source image and the same instruction for each edit.

These tests focus on whether the model changes only what was requested while preserving product identity, physical materials, framing, and lighting.

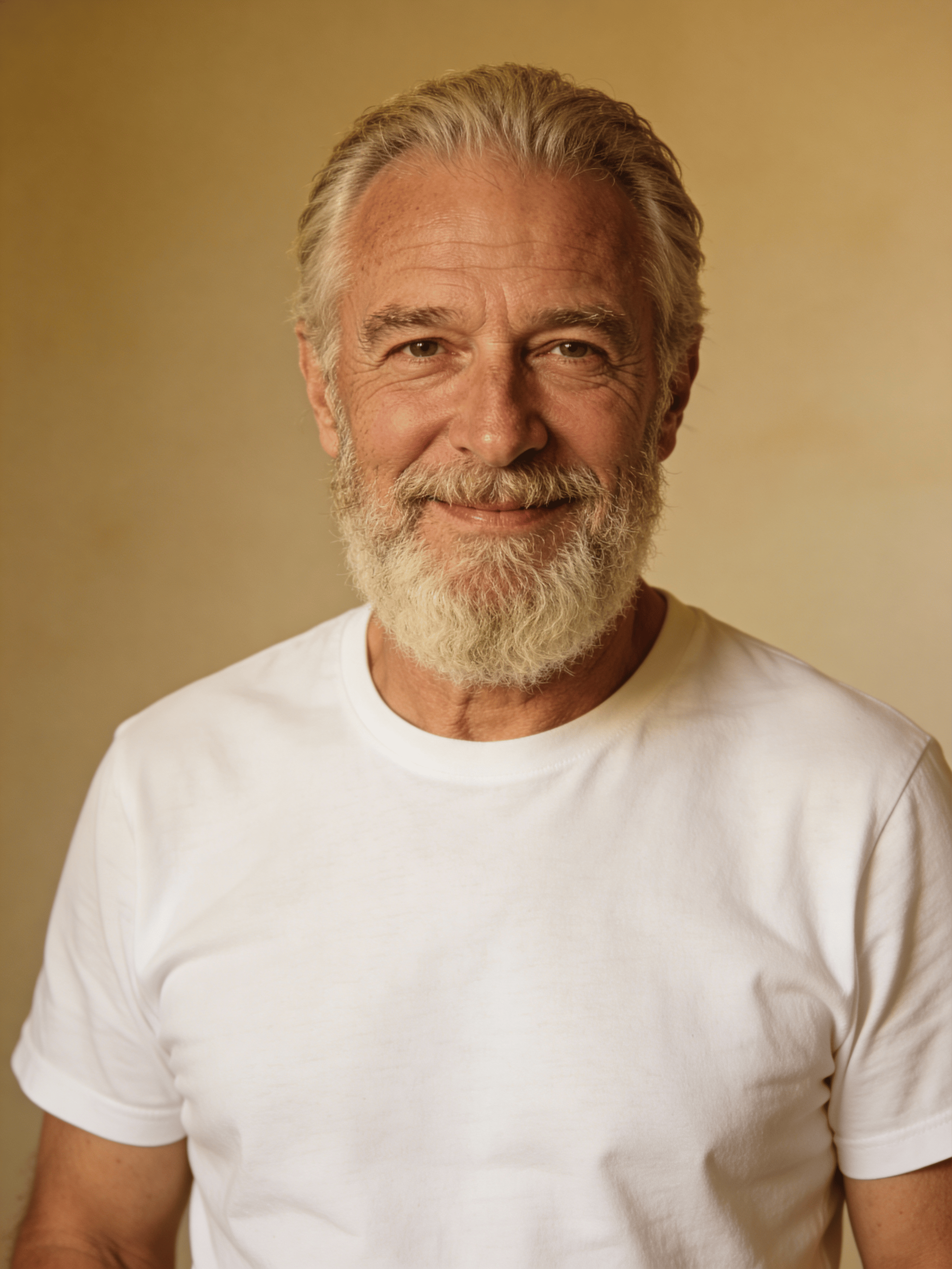

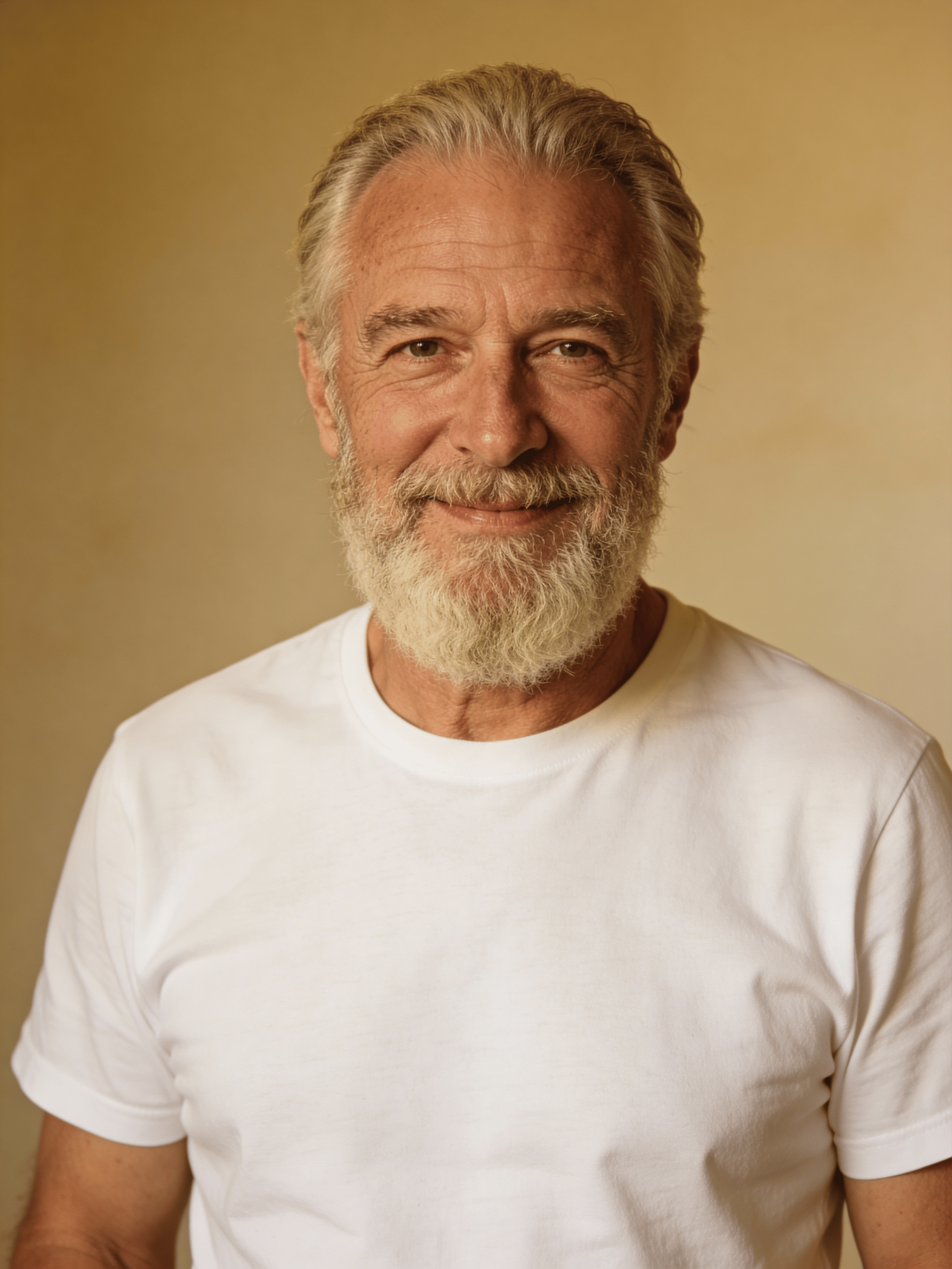

Identity-Preserving Background Change

What this test checks

Both models receive the same portrait and must replace only the environment while keeping the subject intact. This is a practical edit benchmark, not a beauty test.

Verdict:

Nano Banana 2Why this verdict

Nano Banana 2 wins because it changes the background while keeping the source face almost intact. GPT Image 2 produces a polished office portrait, but it alters the face more and shifts the subject positioning slightly.

Compare input vs

Reference-Guided Product Background Swap

What this test checks

Both models receive the same clean camera-sling reference and must change only the environment. This is a practical ecommerce campaign edit, not a generic beauty test.

Verdict:

Nano Banana 2Why this verdict

Nano Banana 2 wins because it preserves the bag size, shape, and material identity almost exactly while delivering the stronger lighting result. GPT Image 2 also does an excellent job, but Nano Banana 2 honors the background-swap goal more directly.

Compare input vs

Physical Sign Text Replacement

What this test checks

This surgical edit checks whether the model can update one word on a real physical sign while preserving framing, materials, and the surrounding scene.

Verdict:

Nano Banana 2Why this verdict

Nano Banana 2 wins because it changes ONLY to ALWAYS while preserving more of the original board framing, material texture, and sidewalk context. GPT Image 2 also performs the edit cleanly, but it crops and reframes the source more aggressively.

Compare input vs

Nano Banana 2 is the fast everyday default

Fast iteration · strong edits · more Google-specific format options

Nano Banana 2 should remain the first model to test when the workload is exploratory, high-volume, preservation-heavy, or format-led. It also has several non-shared aspect ratios that are useful for banners and wide creative.

- Strong shared-ratio generation and editing workflow.

- Won all reviewed preservation-heavy editing tests.

- Exclusive 4:1 and 8:1 formats in HummingBytes.

- Better starting point when generation speed matters.

GPT Image 2 is the newest OpenAI text-and-layout production test

Flexible sizes · 1K/2K/4K medium quality · text-heavy and ecommerce layouts

GPT Image 2 should be tested when the output needs to behave like a finished commercial layout: a marketplace packshot, social ad, readable poster, editorial design, or exact-size channel deliverable. Its medium-quality path can produce strong results at 1K, 2K, and 4K while costing fewer credits than Nano Banana 2 at the same image size.

- Good fit for polished campaign and ecommerce assets.

- Medium quality can produce strong 1K, 2K, and 4K results at lower same-size credit cost in HummingBytes.

- Supports GPT Image 2-specific 3:1 and 1:3 formats.

- High quality mode can be meaningfully slower, so reserve it for outputs where polish is worth the wait.

- Useful when OpenAI image generation is part of the workflow requirement.